One way of getting insight into non-Gaussian measures is to first obtain good Gaussian approximations. These best fit Gaussians can then provide a sense of the mean and variance of the distribution of interest. They can also be used to accelerate sampling algorithms. This raises the question of how one should measure optimality, and how to then obtain this optimal approximation. Here, we consider the problem of minimizing the distance between a family of Gaussians and the target measure with respect to relative entropy, or Kullback-Leibler divergence. As we are interested in applications in the infinite dimensional setting, it is desirable to have convergent algorithms that are well posed on abstract Hilbert spaces. We examine this minimization problem by seeking roots of the first variation of relative entropy, taken with respect to the mean of the Gaussian, leaving the covariance fixed. We prove the convergence of Robbins-Monro type root finding algorithms in this context, highlighting the assumptions necessary for convergence to relative entropy minimizers. Numerical examples are included to illustrate the algorithms.

1. Introduction

In [13,14,15,16,19], it was proposed that insight into a probability distribution, μ, posed on a Hilbert space, H, could be obtained by finding a best fit Gaussian approximation, ν. This notion of best, or optimal, was with respect to the relative entropy, or Kullback-Leibler divergence:
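For reference, the standard definition of relative entropy, in the ordering R(ν||μ) used throughout, can be written as follows; the notation is a conventional choice assumed here rather than quoted:

```latex
\[
R(\nu \,\|\, \mu) =
\begin{cases}
\mathbb{E}^{\nu}\!\left[\log \dfrac{d\nu}{d\mu}\right], & \nu \ll \mu,\\[1.5ex]
+\infty, & \text{otherwise.}
\end{cases}
\]
```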

Having a Gaussian approximation provides qualitative insight into μ, as it provides a concrete notion of the mean and variance of the distribution. Additionally, this optimized distribution can be used in algorithms, such as random walk Metropolis, as a preconditioned proposal distribution to improve performance. Such a strategy can benefit a number of applications, including path space sampling for molecular dynamics and parameter estimation in statistical inverse problems.

Observe that in the definition of R, (1.1), there is an asymmetry in the arguments. Were we to work with R(μ||ν), our optimal Gaussian would capture the first and second moments of μ, and in some applications this is desirable. However, for a multimodal problem (consider a distribution with two well separated modes), this would be inadequate; our form attempts to match individual modes of the distribution by a Gaussian. For a recent review of the R(ν||μ) problem, see [4], where it is remarked that this choice of arguments is likely to underestimate the dispersion of the distribution of interest, μ. The other ordering of arguments has been explored, in the finite dimensional case, in [2,3,10,18].

To be of computational use, it is necessary to have an algorithm that will converge to this optimal distribution. In [15], this was accomplished by first expressing ν=N(m,C(p)), where m is the mean and p is a parameter inducing a well defined covariance operator, and then solving the problem,

over an admissible set. The optimization step itself was done using the Robbins-Monro algorithm (RM), [17], by seeking a root of the first variation of the relative entropy. While the numerical results of [15] were satisfactory, being consistent with theoretical expectations, no rigorous justification for the application of RM to the examples was given.

In this work, we emphasize the study and application of RM to potentially infinite dimensional problems. Indeed, following the framework of [15,16], we assume that μ is posed on the Borel σ-algebra of a separable Hilbert space (H,⟨∙,∙⟩,‖∙‖). For simplicity, we will leave the covariance operator C fixed, and only optimize over the mean, m. Even in this case, we are seeking m∈H, a potentially infinite-dimensional space.

1.1. Robbins-Monro

Given the objective function f:H→H, assume that it has a root, x⋆. In our application to relative entropy, f will be its first variation. Further, we assume that we can only observe a noisy version of f, F:H×χ→H, such that for all x∈H,

where μZ is the distribution associated with the random variable (r.v.) Z, taking values in the auxiliary space χ. The naive Robbins-Monro algorithm is given by

where Zn∼μZ, are independent and identically distributed (i.i.d.), and an>0 is a carefully chosen sequence. Subject to assumptions on f, F, and the distribution μZ, it is known that Xn will converge to x⋆ almost surely (a.s.), in finite dimensions, [5,6,17]. Often, one needs to assume that f grows at most linearly,

in order to apply the results in the aforementioned papers. The analysis in the finite dimensional case has been refined tremendously over the years, including an analysis based on continuous dynamical systems. We refer the reader to the books [1,8,11] and references therein.
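As an illustration of the naive iteration described above, the following is a minimal finite dimensional sketch in Python with NumPy. The function name `robbins_monro`, the step sizes a_n = a0/n, and the toy regression function f(x) = x − x⋆ with additive Gaussian noise are all assumptions made for this example; they are not taken from the references.

```python
import numpy as np


def robbins_monro(F, sample_z, x0, n_steps, a0=1.0, rng=None):
    """Naive Robbins-Monro: X_{n+1} = X_n - a_{n+1} F(X_n, Z_{n+1}).

    F        : noisy observation of the regression function f
    sample_z : draws one i.i.d. sample Z from the auxiliary distribution
    a0       : scale of the (assumed) step sizes a_n = a0 / n
    """
    rng = np.random.default_rng() if rng is None else rng
    x = np.array(x0, dtype=float)
    for n in range(1, n_steps + 1):
        a_n = a0 / n  # satisfies sum a_n = inf, sum a_n^2 < inf
        x = x - a_n * F(x, sample_z(rng))
    return x


# Toy problem (hypothetical): f(x) = x - x_star, observed with N(0, I) noise.
x_star = np.array([1.0, -2.0, 0.5])


def F_linear(x, z):
    return (x - x_star) + z


def sample_normal(rng):
    return rng.standard_normal(3)


x_final = robbins_monro(F_linear, sample_normal, np.zeros(3), 10_000,
                        rng=np.random.default_rng(0))
print("error:", np.linalg.norm(x_final - x_star))
```

This regression function grows linearly, so no truncation is needed; for this particular choice of F and a0 = 1, the error after n steps is minus the sample mean of the noise.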

1.2. Trust regions and truncations

As noted, much of the analysis requires the regression function f to have, at most, linear growth. Alternatively, an a priori assumption is sometimes made that the entire sequence generated by (1.4) stays in a bounded set. Both assumptions are limiting, though, in practice, one may find that the algorithms converge.

One way of overcoming these assumptions, while still ensuring convergence, is to introduce trust regions that the sequence {Xn} is permitted to explore, along with a "truncation" which enforces the constraint. Such truncations distort (1.4) into

where Pn+1 is the projection keeping the sequence {Xn} within the trust region. Projection algorithms are also discussed in [1,8,11].

We consider RM on a possibly infinite dimensional separable Hilbert space. This is of particular interest as, in the context of relative entropy optimization, we may be seeking a distribution in a Sobolev space associated with a PDE model. A general analysis of RM with truncations in Hilbert spaces can be found in [20]. The main purpose of this work is to adapt the analysis of [12] to the Hilbert space setting for two versions of the truncated problem. The motivation for this is that the analysis of [12] is quite straightforward, and it is instructive to see how it can be easily adapted to the infinite dimensional setting. The key modification in the proof is that results for Banach space valued martingales must be invoked. We also adapt the results to a version of the algorithm where there is prior knowledge on the location of the root. With these results in hand, we can then verify that the relative entropy minimization problem can be solved using RM.

1.2.1. Fixed trust regions

In some problems, one may have a priori information on the root. For instance, we may know that x⋆∈U1, some open bounded set. In this version of the truncated algorithm, we have two open bounded sets, U0⊊U1, and x⋆∈U1. Let σ0=0 and X0∈U0 be given, then (1.6) can be formulated as

We interpret ˜Xn+1 as the proposed move, which is either accepted or rejected depending on whether or not it will remain in the trust region. If it is rejected, the algorithm restarts at X(σn)0∈U0. The restart points, {X(σn)0}, may be random, or it may be that X(σn)0=X0 is fixed. The essential property is that the algorithm will restart in the interior of the trust region, away from its boundary. The r.v. σn counts the number of times a truncation has occurred. Algorithm (1.7) can now be expressed as

1.2.2. Expanding trust regions

In the second version of truncated Robbins-Monro, define the sequence of open bounded sets, Un such that:

Again, letting X0∈U0, σ0=0, the algorithm is

A consequence of this formulation is that Xn∈Uσn for all n. As before, the restart points may be random or fixed, and they are in U0. This would appear superior to the fixed trust region algorithm, as it does not require knowledge of the sets. However, to guarantee convergence, global (in H) assumptions on the regression function are required; see Assumption 2 below. Algorithm (1.10) can be written with Pn+1 as

1.3. Outline

In Section 2, we state sufficient assumptions for which we are able to prove convergence in both the fixed and expanding trust region problems, and we also establish some preliminary results. In Section 3, we focus on the relative entropy minimization problem, and identify what assumptions must hold for convergence to be guaranteed. Examples are then presented in Section 4, and we conclude with remarks in Section 5.

2. Convergence of Robbins-Monro

We first reformulate (1.8) and (1.15) in the more general form

where δMn+1, the noise term, is

A natural filtration for this problem is Fn=σ(X0,Z1,…,Zn). Xn is Fn measurable and the noise term can be expressed in terms of the filtration as δMn+1=F(Xn,Zn+1)−E[F(Xn,Zn+1)∣Fn].

We now state our main assumptions:

Assumption 1. f has a zero, x⋆. In the case of the fixed trust region problem, there exist R0<R1 such that

In the case of the expanding trust region problem, the open sets are defined as Un=Brn(0) with

These sets clearly satisfy (1.9).

Assumption 2. For any 0<a<A, there exists δ>0:

In the case of the fixed truncation, this inequality is restricted to x∈U1. This is akin to a convexity condition on a functional F with f=DF.

Assumption 3. x↦E[‖F(x,Z)‖²] is bounded on bounded sets, with the restriction to U1 in the case of fixed trust regions.

Assumption 4. an>0, ∑an=∞, and ∑an²<∞.
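A common family of step sizes satisfying Assumption 4 is the polynomially decaying one. The constants a_0 and γ below are illustrative and are not prescribed by the text:

```latex
\[
a_n = a_0\, n^{-\gamma}, \qquad a_0 > 0, \quad \gamma \in \left(\tfrac{1}{2}, 1\right],
\]
\[
\sum_{n \ge 1} a_n = a_0 \sum_{n \ge 1} n^{-\gamma} = \infty \quad (\gamma \le 1),
\qquad
\sum_{n \ge 1} a_n^2 = a_0^2 \sum_{n \ge 1} n^{-2\gamma} < \infty \quad (2\gamma > 1).
\]
```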

Theorem 2.1. Under the above assumptions, for the fixed trust region problem, Xn→x⋆ a.s. and σn is a.s. finite.

Theorem 2.2. Under the above assumptions, for the expanding trust region problem, Xn→x⋆ a.s. and σn is a.s. finite.

Note the distinction between the assumptions in the two algorithms. In the fixed truncation algorithm, Assumptions 2 and 3 need only hold in the set U1, while in the expanding truncation algorithm, they must hold in all of H. While the former would seem to be a weaker condition, it requires identification of the sets U0 and U1 for which the assumptions hold. Such sets may not be readily identifiable, as we will see in our examples.
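The two truncated iterations of Sections 1.2.1 and 1.2.2 differ only in the set against which a proposed move is tested, which the following finite dimensional Python sketch makes explicit. The function `truncated_rm`, the step sizes a_n = a0/n, the fixed restart point, the toy regression function with cubic growth, and the particular regions are all assumptions chosen for illustration.

```python
import numpy as np


def truncated_rm(F, sample_z, restart, in_region, n_steps, a0=1.0, rng=None):
    """Truncated Robbins-Monro with a fixed restart point (sketch).

    in_region(x, sigma) decides whether a proposed move is accepted:
      * fixed trust region:     ignore sigma, test membership of U_1;
      * expanding trust region: test membership of U_sigma.
    Returns the final iterate and the number of truncations sigma.
    """
    rng = np.random.default_rng() if rng is None else rng
    restart = np.asarray(restart, dtype=float)
    x, sigma = restart.copy(), 0
    for n in range(1, n_steps + 1):
        a_n = a0 / n  # assumed step sizes
        proposal = x - a_n * F(x, sample_z(rng))
        if in_region(proposal, sigma):
            x = proposal                      # move accepted
        else:
            x, sigma = restart.copy(), sigma + 1  # truncate and restart
    return x, sigma


# Toy regression function (hypothetical) with superlinear growth:
# f(x) = (x - x_star) (1 + |x - x_star|^2), observed with N(0, I) noise.
x_star = np.array([1.0, -1.0])


def F_cubic(x, z):
    e = x - x_star
    return e * (1.0 + e @ e) + z


def sample_normal(rng):
    return rng.standard_normal(2)


def in_expanding(x, sigma):
    return np.linalg.norm(x) < sigma + 1.0  # U_n = B_{n+1}(0)


def in_fixed(x, sigma):
    return np.linalg.norm(x) < 3.0          # U_1 = B_3(0), contains x_star


x_exp, sig_exp = truncated_rm(F_cubic, sample_normal, np.zeros(2),
                              in_expanding, 20_000, rng=np.random.default_rng(0))
x_fix, sig_fix = truncated_rm(F_cubic, sample_normal, np.zeros(2),
                              in_fixed, 20_000, rng=np.random.default_rng(1))
print("expanding:", x_exp, "truncations:", sig_exp)
print("fixed:    ", x_fix, "truncations:", sig_fix)
```

In the expanding case the test set grows by one unit of radius after each truncation, so no prior knowledge of the root is built into `in_expanding`; the fixed case requires choosing a region that contains x⋆.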

We first need some additional information about f and the noise sequence δMn.

Lemma 2.1. Under Assumption 3, f is bounded on U1, for the fixed trust region problem, and on arbitrary bounded sets, for the expanding trust region problem.

Proof. Trivially,

and the result follows from the assumption.

Proposition 2.1. For the fixed trust region problem, let

Alternatively, in the expanding trust region problem, for r>0, let

Under Assumptions 3 and 4, Mn is a martingale, converging in H, a.s.

Proof. The following argument holds in both the fixed and expanding trust region problems, with appropriate modifications. We present the expanding trust region case. The proof is broken up into 3 steps:

1. Relying on Theorem 6 of [7] for Banach space valued martingales, it will be sufficient to show that Mn is a martingale, uniformly bounded in L1(P).

2. In the case of the expanding truncations,

Since both of these terms are bounded, independently of i, by Assumption 3 and Lemma 2.1, this is finite.

3. Next, since {δMi1‖Xi−1−x⋆‖≤r} is a martingale difference sequence, we can use the above estimate to obtain the uniform L2(P) bound,

Uniform boundedness in L2 gives boundedness in L1, and this implies a.s. convergence in H.

2.1. Finite truncations

In this section we prove results showing that only finitely many truncations will occur, in either the fixed or expanding trust region case. Recall that when a truncation occurs, the following equivalent conditions hold: Pn+1≠0; σ_{n+1}=σ_n+1; and ˜Xn+1∉U1 in the fixed trust region algorithm, while ˜Xn+1∉Uσn in the expanding trust region case.

Lemma 2.2. In the fixed trust region algorithm, if Assumptions 1, 2, 3, and 4 hold, then the number of truncations is a.s. finite; a.s., there exists N, such that for all n≥N, σn=σN.

Proof. We break the proof up into 7 steps:

1. Pick ρ and ρ′ such that

Let ˉf=sup‖f(x)‖, with the supremum over U1; this bound exists by Lemma 2.1. Under Assumption 2, there exists δ>0 such that

Having fixed ρ, ρ′, ˉf, and δ, take ϵ>0 such that:

Having fixed such an ϵ, by the assumptions of this lemma and Proposition 2.1, a.s., there exists nϵ such that for any n,m≥nϵ, both

2. Define the auxiliary sequence

Using (2.1), we can then write

By (2.7), for any n≥nϵ,

3. We will show X′n∈Bρ′(x⋆) for all n large enough. The significance of this is that if n≥nϵ, and X′n∈Bρ′(x⋆), then no truncation occurs. Indeed, using (2.6)

Consequently, Pn+1=0, Xn+1=˜Xn+1, and σn+1=σn. Thus, establishing X′n∈Bρ′(x⋆) will yield the result.

4. Let

This corresponds to the first truncation after nϵ. If the above set is empty, for that realization, no truncations occur after nϵ, and we are done. In such a case, we may take N=nϵ in the statement of the lemma.

5. We now prove by induction that in the case that (2.12) is finite, X′n∈Bρ′(x⋆) for all n≥N. First, note that XN∈BR0(x⋆)⊂Bρ(x⋆). By (2.6) and (2.10),

Next, assume X′N,X′N+1,…,X′n are all in Bρ′(x⋆). Using (2.11), we have that PN+1=…=Pn+1=0 and σN=…=σn=σn+1. Therefore,

We now consider two cases of (2.13) to conclude ‖X′n+1−x⋆‖<ρ′.

6. In the first case, ‖X′n−x⋆‖≤R0. By Cauchy-Schwarz and (2.6)

In the second case, R0<‖X′n−x⋆‖<ρ′. Dissecting the inner product term in (2.13) and using Assumption 2 and (2.10),

Conditions (2.6) and (2.10) yield the following upper and lower bounds:

Therefore, (2.5) applies and ⟨Xn−x⋆,f(Xn)⟩≥δ. Using this in (2.14), and condition (2.6),

Substituting this last estimate back into (2.13), and using (2.6),

This completes the inductive step.

7. Since the auxiliary sequence remains in Bρ′(x⋆) for all n≥N>nϵ, (2.11) ensures ˜Xn+1∈BR1(x⋆), Pn+1=0, and σn+1=σN, a.s.

To obtain a similar result for the expanding trust region problem, we first relate the finiteness of the number of truncations with the sequence persisting in a bounded set.

Lemma 2.3. In the expanding trust region algorithm, if Assumptions 1, 3, and 4 hold, then the sequence remains in a set of the form BR(0) for some R>0 if and only if the number of truncations is finite, a.s.

Proof. We break this proof into 4 steps:

1. If the number of truncations is finite, then there exists N such that for all n≥N, σn=σN. Consequently, the proposed moves are always accepted, and Xn∈Uσn=UσN for all n≥N. Since Xn∈Uσn⊂UσN for n<N, Xn∈UσN for all n. By Assumption 1, BR(0)=BrσN(0)=UσN is the desired set.

2. For the other direction, assume that there exists R>0 such that Xn∈BR(0) for all n. Since the rn in (2.3) tend to infinity, there exists N1, such that R<R+1<rN1. Hence, for all n≥N1,

Let ˉf=sup‖f(x)‖, with the supremum over BR(0). Let ˜R be sufficiently large such that BR+1(0)⊂B˜R(x⋆). Lastly, using Proposition 2.1 and Assumption 4, a.s., there exists N2, such that for all n≥N2

Since Xn∈BR(0)⊂B˜R(x⋆), the indicator function in (2.16) is always one, and ‖anδMn‖<1/2.

3. Next, let

If the above set is empty, then σn<max{N1,N2} for all n, and the number of truncations is a.s. finite. In this case, the proof is complete.

4. If the set in (2.17) is not empty, then N<∞. Take n≥N. As Xn∈BR(0), and since n≥σn≥max{N1,N2}, (2.16) applies. Therefore,

Thus, ˜Xn+1∈BR+1(0)⊂UN1, σn≥N1, and UN1⊂Uσn. Therefore, ˜Xn+1∈Uσn. No truncation occurs, and σn=σn+1. Since this holds for all n≥N, σn=σN, and the number of truncations is a.s. finite.

Next, we establish that, subject to an additional assumption, the sequence remains in a bounded set; the finiteness of the truncations is then a corollary.

Lemma 2.4. In the expanding trust region algorithm, if Assumptions 1, 2, 3, and 4 hold, and for any r>0, there a.s. exists N<∞, such that for all n≥N,

then {Xn} remains in a bounded open set, a.s.

Proof. We break this proof into 7 steps:

1. We begin by setting some constants for the rest of the proof. Fix R>0 sufficiently large such that BR(x⋆)⊃U0. Next, let ˉf=sup‖f(x)‖ with the supremum taken over BR+2(x⋆). Assumption 2 ensures there exists δ>0 such that

Having fixed R, ˉf, and δ, take ϵ>0 such that:

By the assumptions of this lemma and Proposition 2.1 there exists, a.s., nϵ≥N such that for all n≥nϵ,

2. Define the modified sequence for n≥nϵ as

Using (2.1), we have the iteration

3. Let

the first time after nϵ that a truncation occurs.

If the above set is empty, no truncations occur after nϵ. In this case, σn=σnϵ≤nϵ<∞ for all n≥nϵ. Therefore, for all n≥nϵ, Xn∈Uσn⊂Uσnϵ. Since Uσn⊂Uσnϵ for all n<nϵ too, the proof is complete in this case.

4. Now assume that N<∞. We will show that {X′n} remains in BR+1(x⋆) for all n≥N. Were this to hold, then for n≥N,

having used (2.21) and (2.22). For n<N, Xn∈Uσn⊂UσN=BrN(0). Therefore, for all n, Xn∈B˜R(0) where ˜R=max{rN,‖x⋆‖+R+2}.

5. We prove X′n∈BR+1(x⋆) by induction. First, since ϵ<1 and XN∈U0⊂BR(x⋆),

Next, assume that X′N,X′N+1,…,X′n are all in BR+1(x⋆). By (2.25), Xn∈BR+2(x⋆). Since Pn+11‖Xn−x⋆‖≤R+2=0, we conclude Pn+1=0. The modified iteration (2.23) simplifies to

and

6. We now consider two cases of (2.26). First, assume ‖X′n−x⋆‖≤R. Then (2.26) can immediately be bounded as

where we have used condition (2.20) in the last inequality.

7. Now consider the case R<‖X′n−x⋆‖<R+1. Using (2.20), the inner product in (2.26) can first be bounded from below:

Next, using (2.20)

Therefore, R/2<‖Xn−x⋆‖<R+2, so (2.19) ensures ⟨Xn−x⋆,f(Xn)⟩≥δ and

Returning to (2.26), by (2.20),

This completes the proof of the inductive step in this second case, completing the proof.

Corollary 2.1. For the expanding trust region algorithm, if Assumptions 1, 2, 3, and 4 hold, then the number of truncations is a.s. finite.

Proof. The proof is by contradiction. We break the proof into 4 steps:

1. Assuming that there are infinitely many truncations, Lemma 2.3 implies that the sequence cannot remain in a bounded set. Then, continuing to assume that Assumptions 1, 2, 3, and 4 hold, the only way for the conclusion of Lemma 2.4 to fail is if the assumption on Pn+11‖Xn−x⋆‖≤r is false. Therefore, there exists r>0 and a set of positive measure on which, along a subsequence, Pnk+11‖Xnk−x⋆‖≤r≠0. Hence Xnk∈Br(x⋆), and Pnk+1≠0. So truncations occur at these indices, and ˜Xnk+1∉Uσnk.

2. Let ˉf=sup‖f(x)‖ with the supremum over the set Br(x⋆) and let ϵ>0 satisfy

By the assumptions of this corollary and Proposition 2.1, there exists nϵ such that for all n≥nϵ

Along the subsequence, for all nk≥nϵ,

3. Furthermore, for nk≥nϵ:

where (2.27) has been used in the last inequality.

4. By the definition of the Un, there exists an index M such that UM⊃Br+1(x⋆). Let

This set is nonempty and N<∞ since we have assumed there are infinitely many truncations. Let nk≥N. Then σnk≥M and Uσnk⊃Br+1(x⋆). But (2.30) then implies that ˜Xnk+1∈Uσnk, and no truncation will occur; Pnk+1=0, providing the desired contradiction.

2.2. Proof of convergence

Using the above results, we are able to prove Theorems 2.1 and 2.2. Since the proofs are quite similar, we present the more complicated expanding trust region case.

Proof. We split this proof into 6 steps:

1. First, by Corollary 2.1, only finitely many truncations occur. By Lemma 2.3, there exists R>0 such that Xn∈BR(0) for all n. Consequently, there is an r such that Xn∈Br(x⋆) for all n.

2. Next, we fix constants. Let ˉf=sup‖f(x)‖ with the supremum taken over Br(x⋆). Fix η∈(0,2R), and use Assumption 2 to determine δ>0 such that

Take ϵ>0 such that:

Having set ϵ, we again appeal to Assumption 4 and Proposition 2.1 to find nϵ such that for all n≥nϵ:

3. Define the auxiliary sequence,

Since there are only finitely many truncations, there exists N≥nϵ, such that for all n≥N, Pn+1=0, as the truncations have ceased. Consequently, for n≥N,

By (2.34) and (2.35), for n≥N, ‖Xn−X′n‖≤ϵ. Since ϵ>0 may be arbitrarily small, it will be sufficient to prove X′n→x⋆.

4. To obtain convergence of X′n, we first examine ‖X′n+1−x⋆‖. For n≥N,

Now consider two cases of this expression. First, assume ‖X′n−x⋆‖≤η. In this case, using (2.33),

where B>0 is a constant depending only on R and ˉf. For ‖X′n−x⋆‖>η, using (2.33)

By (2.33),

Since ‖Xn−x⋆‖<r too, (2.32) and (2.39) yield the estimate

Thus, in this regime, using (2.33),

where A>0 is a constant depending only on δ.

Combining estimates (2.38) and (2.40), we can write for n≥N

5. We now show that ‖X′n−x⋆‖≤η i.o. The argument is by contradiction. Let M≥N be such that for all n≥M, ‖X′n−x⋆‖>η. For such n,

Using Assumption 4 and taking n→∞, we obtain a contradiction.

6. Finally, we prove convergence of X′n→x⋆. Since X′n∈Bη(x⋆) i.o., let

For n≥N′, we can then define

For all such n, φ(n)≤n, and X′φ(n)∈Bη(x⋆).

We claim that for n≥N′,

First, if n=φ(n), this trivially holds in (2.41). Suppose now that n>φ(n). Then for i=φ(n)+1,φ(n)+2,…n, ‖X′i−x⋆‖>η. Consequently,

As φ(n)→∞,

Since η may be arbitrarily small, we conclude that

completing the proof.

3. Minimization of relative entropy

Recall from the introduction that our distribution of interest, μ, is posed on the Borel subsets of Hilbert space H. We assume that μ≪μ0, where μ0=N(m0,C0) is some reference Gaussian. Thus, we write

where Φμ:X→R is assumed to be continuous, and X is a Banach space, a subspace of H of full measure with respect to the Gaussian μ0 on H. Zμ=Eμ0[exp{−Φμ(u)}]∈(0,∞) is the partition function ensuring we have a probability measure.

Let ν=N(m,C), be another Gaussian, equivalent to μ0, such that we can write

Assuming that ν≪μ, we can write

The assumption that ν≪μ implies that ν and μ are equivalent measures. As was proven in [16], if A is a set of Gaussian measures, closed under weak convergence, such that at least one element of A is absolutely continuous with respect to μ, then any minimizing sequence over A will have a weak subsequential limit.

If we assume, for this work, that C=C0, then, by the Cameron-Martin formula (see [9]),

Here, ⟨∙,∙⟩H1 and ‖∙‖H1 are the inner product and norms of the Cameron-Martin Hilbert space, denoted H1,

Convergence to the minimizer will be established in H1, and H1 will be the relevant Hilbert space in our application of Theorems 2.1 and 2.2 to this problem.

Letting ν0=N(0,C0) and v∼ν0, we can then rewrite (3.3) as

The Euler-Lagrange equation associated with (3.6), and the second variation, are:

3.1. Application of Robbins-Monro

In [15], it was suggested that rather than try to find a root of (3.7), the equation first be preconditioned by multiplying by C0,

and a root of this mapping is sought, instead. Defining

the Robbins-Monro formulation is then

with vn∼ν0, i.i.d.
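To make the structure of this iteration concrete, the following sketches how the objective, its first variation, and the preconditioned noisy observation fit together when C=C0. It is derived from the Cameron-Martin formula, with constants independent of m dropped from J, and is consistent with the scalar examples of Section 4. The symbol F and the grouping of terms are assumptions of this sketch, and the truncation step is omitted:

```latex
\begin{align*}
J(m) &= \mathbb{E}^{\nu_0}\!\left[\Phi_\mu(v+m)\right] + \tfrac{1}{2}\,\|m-m_0\|_{H^1}^2, \\
J'(m) &= \mathbb{E}^{\nu_0}\!\left[\Phi_\mu'(v+m)\right] + C_0^{-1}(m-m_0), \\
C_0 J'(m) &= C_0\,\mathbb{E}^{\nu_0}\!\left[\Phi_\mu'(v+m)\right] + m - m_0, \\
F(m,v) &= C_0\,\Phi_\mu'(v+m) + m - m_0, \\
m_{n+1} &= m_n - a_{n+1}\,F(m_n, v_{n+1}), \qquad v_{n+1}\sim\nu_0 \ \text{i.i.d.}
\end{align*}
```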

We thus have

Theorem 3.1. Assume:

● There exists ν=N(m,C0)∼μ0 such that ν≪μ.

● Φ′μ and Φ″μ exist for all u∈H1.

● There exists m⋆, a local minimizer of J, such that J′(m⋆)=0.

● The mapping

is bounded on bounded subsets of H1.

● There exists a convex neighborhood U⋆ of m⋆ and a constant α>0, such that for all m∈U⋆, for all u∈H1,

Then, choosing an according to Assumption 4,

● If the subset U⋆ can be taken to be all of H1, for the expanding truncation algorithm, mn→m⋆ a.s. in H1.

● If the subset U⋆ is not all of H1, then, taking U1 to be a bounded (in H1) convex subset of U⋆, with m⋆∈U1, and U0 any subset of U1 such that there exist R0<R1 with

for the fixed truncation algorithm, mn→m⋆ a.s. in H1.

Proof. We split the proof into 2 steps:

1. By the assumptions of the theorem, we clearly satisfy Assumptions 1 and 4. To satisfy Assumption 3, we observe that

This is bounded on bounded subsets of H1.

2. The convexity assumption, (3.14), implies Assumption 2, since, by the mean value theorem in function spaces,

where ˜m is some intermediate point between m and m⋆. This completes the proof.

While condition (3.14) is sufficient to obtain convexity, other conditions are possible. For instance, suppose there is a convex open set U⋆ containing m⋆ and constant θ∈[0,1), such that for all m∈U⋆,

where λ1 is the principal eigenvalue of C0. Then this would also imply Assumption 2, since

We mention (3.15) as there may be cases, shown below, for which the operator Eν0[Φ″μ(v+m)] is obviously nonnegative.

4. Examples

To apply the Robbins-Monro algorithm to the relative entropy minimization problem, the Φμ functional of interest must be examined. In this section we present a few examples, based on those presented in [15], and examine when the assumptions hold. The one outstanding assumption that we must make is that, a priori, μ0 is an equivalent measure to μ.

4.1. Scalar problem

Taking μ0=N(0,1), the standard unit Gaussian, let V:R→R be a smooth function such that

is a probability measure on R. For these scalar cases, we use x in place of v. In the above framework,

and ξ∼N(0,1)=ν0=μ0.

4.1.1. Globally convex case

Consider the case that

In this case

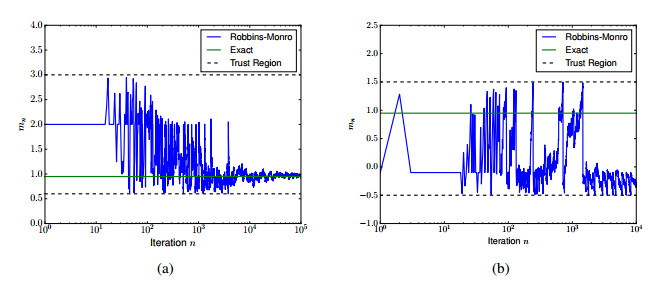

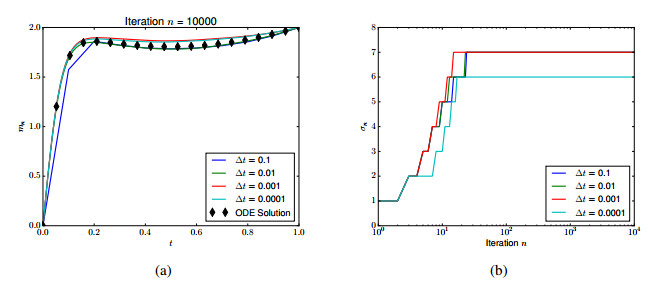

Since E[Φ″μ(x+ξ)]≥4ϵ⁻¹, all of our assumptions are satisfied and the expanding truncation algorithm will converge to the unique root at x⋆=0 a.s. See Figure 1 for an example of the convergence at ϵ=0.1, Un=(−n−1,n+1), and always restarting at 0.5.

We refer to this as a "globally convex" problem since R is globally convex about the minimizer.
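The experiment described in this subsection can be sketched in Python as follows. The sketch assumes the potential V(x)=x²/2+x⁴/4 with Φμ=V/ϵ, a choice consistent with the bound E[Φ″μ(x+ξ)]≥4ϵ⁻¹ and the root x⋆=0 quoted above, together with step sizes a_n = a0/n; the function name, step sizes, and iteration count are assumptions and need not match those used to produce Figure 1.

```python
import numpy as np


def rm_expanding_scalar(eps=0.1, n_steps=50_000, restart=0.5, a0=1.0, seed=0):
    """Expanding trust region Robbins-Monro for the scalar quartic problem.

    Noisy observation (assumed form): F(x, xi) = x + V'(x + xi) / eps,
    with V'(u) = u + u^3 and xi ~ N(0, 1).
    Trust regions: U_n = (-n-1, n+1); restart point fixed at `restart`.
    """
    rng = np.random.default_rng(seed)
    xi = rng.standard_normal(n_steps)
    x, sigma = restart, 0
    for n in range(1, n_steps + 1):
        u = x + xi[n - 1]
        F = x + (u + u**3) / eps
        proposal = x - (a0 / n) * F
        if abs(proposal) < sigma + 1:      # proposal lies in U_sigma
            x = proposal
        else:                              # truncation: restart, enlarge region
            x, sigma = restart, sigma + 1
    return x, sigma


x_quartic, sigma_quartic = rm_expanding_scalar()
print("final iterate:", x_quartic, "truncations:", sigma_quartic)
```

With ϵ=0.1 the early steps are large relative to the trust region, so several truncations are expected before the step sizes become small enough for the iteration to settle near the root.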

4.1.2. Locally convex case

In contrast to the above problem, some minimizers are only "locally" convex. Consider the case of the double well potential

Now, the expressions for RM are

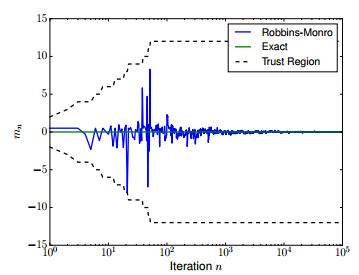

In this case, f(x) vanishes at 0 and ±√(1−ϵ), and J″ changes sign from positive to negative when x enters (−√((1−ϵ)/3), √((1−ϵ)/3)). We must therefore restrict to a fixed trust region if we want to ensure convergence to either of ±√(1−ϵ).

We ran the problem at ϵ=0.1 in two cases. In the first case, U1=(0.6,3.0) and the process always restarts at 2. This guarantees convergence since the second variation will be strictly positive. In the second case, U1=(−0.5,1.5), and the process always restarts at −0.1. Now, the second variation can change sign. The results of these two experiments appear in Figure 2. For some random number sequences the algorithm still converged to √(1−ϵ), even with the poor choice of trust region.
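The first of these two experiments can be sketched in Python as follows. The sketch assumes the double well V(x)=(4−x²)²/4 with Φμ=V/ϵ, which reproduces the roots 0 and ±√(1−ϵ) stated above, and step sizes a_n = a0/n; the function name, step sizes, and iteration count are assumptions and need not match those behind Figure 2.

```python
import numpy as np


def rm_fixed_double_well(eps=0.1, n_steps=200_000, region=(0.6, 3.0),
                         restart=2.0, a0=1.0, seed=0):
    """Fixed trust region Robbins-Monro for the scalar double well problem.

    Noisy observation (assumed form): F(x, xi) = x + V'(x + xi) / eps,
    with V'(u) = u^3 - 4u and xi ~ N(0, 1).
    A proposal leaving `region` (playing the role of U_1) is rejected
    and the iteration restarts at `restart`.
    """
    rng = np.random.default_rng(seed)
    xi = rng.standard_normal(n_steps)
    lo, hi = region
    x, sigma = restart, 0
    for n in range(1, n_steps + 1):
        u = x + xi[n - 1]
        F = x + (u**3 - 4.0 * u) / eps
        proposal = x - (a0 / n) * F
        if lo < proposal < hi:             # proposal stays in the trust region
            x = proposal
        else:                              # truncation: restart
            x, sigma = restart, sigma + 1
    return x, sigma


x_well, sigma_well = rm_fixed_double_well()
print("final iterate:", x_well, "target:", np.sqrt(1 - 0.1),
      "truncations:", sigma_well)
```

Passing `region=(-0.5, 1.5)` and `restart=-0.1` gives a sketch of the second experiment, for which the second variation changes sign inside the region and convergence to √(1−ϵ) is not guaranteed.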

4.2. Path space problem

Take μ0=N(m0(t),C0), with

equipped with Dirichlet boundary conditions on H=L2(0,1).* In this case the Cameron-Martin space is H1=H10(0,1), the standard Sobolev space equipped with the Dirichlet norm. Let us assume m0∈H1(0,1), taking values in Rd.

* This is the covariance of the standard unit Brownian bridge, Yt=Bt−tB1.

Consider the path space distribution on L2(0,1), induced by

where V:Rd→R is a smooth function. We assume that V is such that this probability distribution exists and that μ∼μ0, our reference measure.

We thus seek an Rd valued function m(t)∈H1(0,1) for our Gaussian approximation of μ, satisfying the boundary conditions

For simplicity, take m0=(1−t)m−+tm+, the linear interpolant between m±. As above, we work in the shifted coordinate x(t)=m(t)−m0(t)∈H10(0,1).

Given a path v(t)∈H10, by the Sobolev embedding, v is continuous with its L∞ norm controlled by its H1 norm. Also recall that for ξ∼N(0,C0), in the case of ξ(t)∈R,

Letting λ1=1/π² be the ground state eigenvalue of C0,

The terms involving v+m0 in the integrand can be controlled by the L∞ norm, which in turn is controlled by the H1 norm, while the terms involving ξ can be integrated according to (4.7). As a mapping applied to x, this expression is bounded on bounded subsets of H1.

Minimizers will satisfy the ODE

4.3. Globally convex example

With regard to convexity about a minimizer, m⋆, if, for instance, V″ were pointwise positive definite, then the problem would satisfy (3.15), ensuring convergence. Consider the quartic potential V given by (4.2). In this case,

and

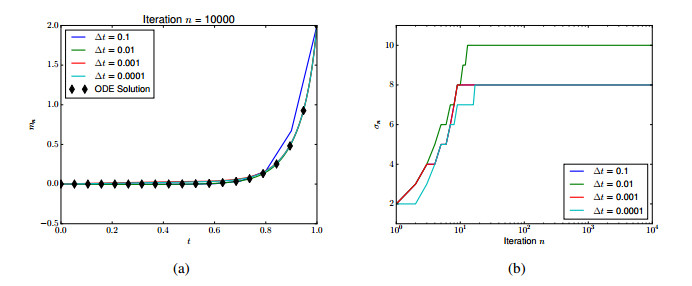

Since Φ″(v+m0+ξ)≥ϵ⁻¹, we are guaranteed convergence using expanding trust regions. Taking ϵ=0.01, m−=0 and m+=2, this is illustrated in Figure 3, where we have also solved (4.8) by ODE methods for comparison. As trust regions, we take

and we always restart at the zero solution. Figure 3 also shows robustness to discretization; the number of truncations is relatively insensitive to Δt.

4.4. Locally convex example

For many problems of interest, we do not have global convexity. Consider the double well potential (4.3), but in the case of paths,

Then,

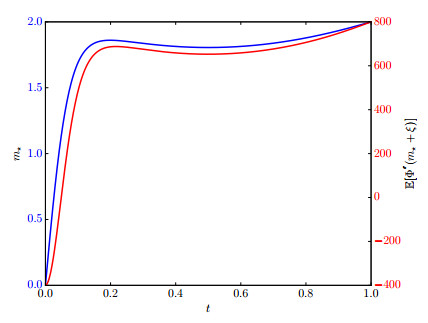

Here, we take m−=0, m+=2, and ϵ=0.01. We have plotted the numerically solved ODE in Figure 4. Also plotted is E[Φ″(v⋆+m0+ξ)]. Note that E[Φ″(v⋆+m0+ξ)] is not sign definite, becoming as small as −400. Since C0 has λ1=1/π²≈0.101, (3.15) cannot apply.

Discretizing the Schrödinger operator

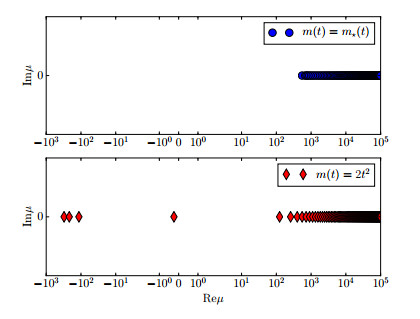

we numerically compute the eigenvalues. Plotted in Figure 5, we see that the minimal eigenvalue of J″(m⋆) is approximately μ1≈550. Therefore,

for all v in some neighborhood of v⋆. For an appropriately selected fixed trust region, the algorithm will converge.

However, we can show that the convexity condition is not global. Consider the path m(t)=2t2, which satisfies the boundary conditions. As shown in Figure 5, this path induces negative eigenvalues.

Despite this, we still observe convergence. Using the fixed trust region

we obtain the results in Figure 6. Again, the convergence is robust to discretization.

5. Discussion

We have shown that the Robbins-Monro algorithm, with both fixed and expanding trust regions, can be applied to Hilbert space valued problems, adapting the finite dimensional proof of [12]. We have also constructed sufficient conditions for which the relative entropy minimization problem fits within this framework.

One problem we did not address here was how to identify fixed trust regions. Indeed, that requires a tremendous amount of a priori information that is almost certainly not available. We interpret that result as a local convergence result that gives a theoretical basis for applying the algorithm. In practice, since the root is likely unknown, one might run some numerical experiments to identify a reasonable trust region, or just use expanding trust regions. The practitioner will find that the algorithm converges to a solution, though perhaps not the one originally envisioned. A more sophisticated analysis may address the convergence to a set of roots, while being agnostic as to which zero is found.

Another problem we did not address was how to optimize not just the mean, but also the covariance in the Gaussian. As discussed in [15], it is necessary to parameterize the covariance in some way, which will be application specific. Thus, while the form of the first variation of relative entropy with respect to the mean, (3.7), is quite generic, the corresponding expression for the covariance will be specific to the covariance parameterization. Additional constraints are also necessary to guarantee that the parameters always induce a covariance operator. We leave such specialization as future work.

Acknowledgments

This work was supported by US Department of Energy Award DE-SC0012733. This work was completed under US National Science Foundation Grant DMS-1818716. The authors would like to thank J. Lelong for helpful comments, along with anonymous reviewers whose reports significantly impacted our work.

Conflict of interest

The authors declare that there are no conflicts of interest in this paper.
